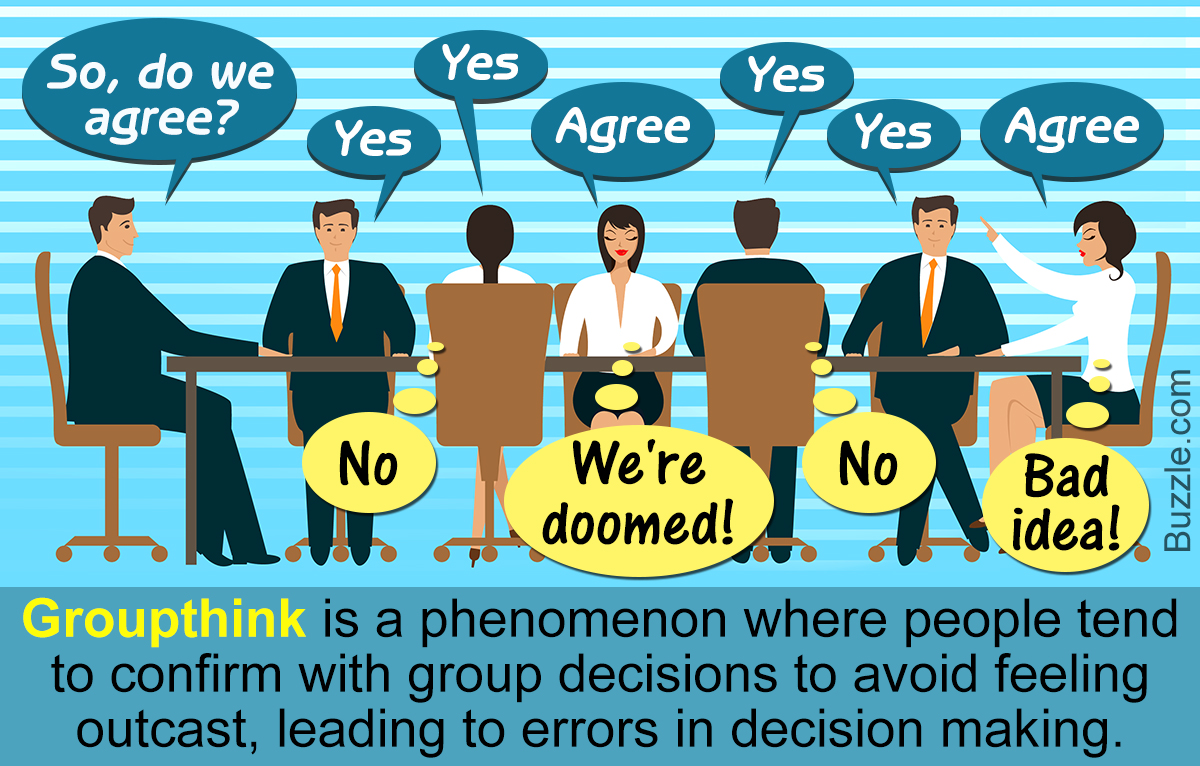

The biases and heuristics we’ve read about up to now all occur at the individual level. In other words, they all have to do with how an individual processes information and makes decisions. One suggestion for overcoming these biases is to have a group make important decisions. The idea is that the different biases and heuristics being used by the different individuals will cancel each other out, resulting in a more rational decision. While there is some evidence to support that claim, group decision making is not a panacea.

Do some reading on group decision making and make sure you do some reading on GroupThink. In addition, the following link is to an article about why groups make better decisions (sometimes). The article is titled “

For this week’s Packback:

1. Think about a work (not school) experience you had with an important group decision. Describe the experience (it is not necessary to disclose proprietary or confidential information), and, referencing your readings, discuss what worked and what did not in the process.

2. What did you find to be the most helpful idea from this semester? What did you learn that you didn’t know before that has changed or is changing how you make decisions (or at least how you think about making decisions)? Please be more specific than System 1 and System 2. We all know we make quick decisions and there are decisions where we take our time; so why was the idea of System 1 and System 2 so revelatory? If there was a specific bias or heuristic that caught your attention, what was it and why was it so interesting?

Do not forget to include the URL for the article on group decision making that you found.

Respond to post #1. Include a works cited list.

1.

Why do some teams successfully challenge individual biases while others fall into groupthink?

One example of a team that challenged bias and then failed to do so occurred in a previous role. I was asked to participate in a cross-functional decision about whether to switch a major supplier after repeated delivery failures. The team included supply chain, operations, finance, and quality. On paper, this diversity should have strengthened the decision: heterogeneous groups surface more information, correct errors more often, and reduce overconfidence, as discussed in Why Diverse Teams Are Smarter (HBR, 2016).

Early in the process, the diversity of expertise worked as intended. Quality challenged assumptions about long-term reliability, operations emphasized production risk, and supply chain highlighted lead-time variability. These competing perspectives forced the group to slow down and question initial impressions, an important counter to individual biases such as anchoring and confirmation bias.

As the discussion progressed, switching suppliers came to look like the obvious solution. Once finance framed the cost savings as decisive, the team’s voices shifted. They began to converge too quickly, and the pressure to align created the illusion of consensus. This is classic groupthink: suppressed dissent, premature closure, and a shared belief that the group must be right because everyone else appears to agree.

The contrast within the same meeting was striking. The team initially challenged individual biases effectively; however, without structured safeguards, the group drifted into the very dynamic it was trying to avoid.

The most helpful idea from this semester that changed my thinking was escalation of commitment. I understood sunk costs in theory, but I had not fully appreciated how strongly people cling to past investments even when it’s evident a change is needed.

That reframing has helped me detach from legacy decisions and evaluate choices more objectively. It has also helped me reinterpret the supplier decision: part of the team’s push for a switch was not just logic but frustration from months of firefighting and an emotional investment in finally making a change.

Escalation of commitment stood out because it revealed that bias is not just fast thinking (System 1) versus slow thinking (System 2); it also involves the desire to appear consistent. Recognizing that has already changed how I approach both individual and group decisions.

Sources

- Why Diverse Teams Are Smarter | Website | David Rock & Heidi Grant, 2016

2. Respond to post #2. Include a works cited list.

If diverse groups reduce bias, when does the pressure for unity start overriding the diversity of thought that makes groups effective?

One situation that comes to mind happened during a training and operational planning discussion at work. Early in the conversation, a few senior personnel strongly supported one specific plan. Once the room started leaning in that direction, most people quit pushing back even though there were still concerns about staffing, communication, and how realistic parts of the plan actually were. Some people brought up valid concerns early on, but after a while the conversation shifted more toward supporting the plan instead of critically evaluating it. The plan still worked overall, but several of the concerns that were initially mentioned ended up becoming problems later during execution.

Looking back on it now, I think the group fell into some level of groupthink. Nobody wanted to be the person slowing things down or openly disagreeing once the majority seemed set on a direction. At the same time, there were positives too. The group itself had people with different backgrounds and experience levels, which at first led to solid discussion and different perspectives being brought up. That connects to the article Why Diverse Teams Are Smarter, because the diversity of experience did improve the quality of the discussion early on. The bigger issue was that the environment slowly became less open to disagreement as the discussion continued. That also reminded me a lot of psychological safety from Teaming. If people don’t feel comfortable speaking up, the benefit of having a diverse group starts to disappear.

The idea that impacted me the most this semester was Kahneman’s illusion of validity. Before this class, I usually thought poor decisions mostly came from lack of training, intelligence, or experience. I never really considered how experienced people can still make bad decisions while feeling extremely confident in them. That stood out to me because in emergency services, confidence is important, especially when things are moving fast. At the same time, this class made me realize confidence sometimes stops people from slowing down and questioning assumptions. I catch myself paying more attention now to the people who disagree or raise concerns because they may be seeing something the rest of the group is missing.

A well-known example of this is the Challenger disaster. Engineers had concerns about the O-rings in cold weather conditions, but pressure to stay on schedule and maintain consensus overpowered those concerns. Looking back at it now, it is easy to see how groupthink and pressure within organizations can lead people to ignore warning signs even when experienced individuals are raising concerns.

Sources

- Groupthink | Website

- How Groupthink Played a Role in The Challenger Disaster | Applied Social Psychol… | Website

- Thinking, Fast and Slow | Book | Kahneman, 2011 | ISBN 978-0-374-27563-1

- Teaming: How Organizations Learn, Innovate, and Compete in the Knowledge Economy | Book | Edmondson, 2012 | ISBN 978-1118216743
